The internet is a double-edged sword for children. It offers a vast repository of knowledge and entertainment but also poses significant risks, from inappropriate content to online predators. In response, a growing industry has emerged that uses artificial intelligence to verify users’ ages and shield minors from the web’s darker corners. However, while these AI age scanners promise enhanced safety, they also raise critical privacy and ethical concerns that need addressing.

The AI Age Verification Boom

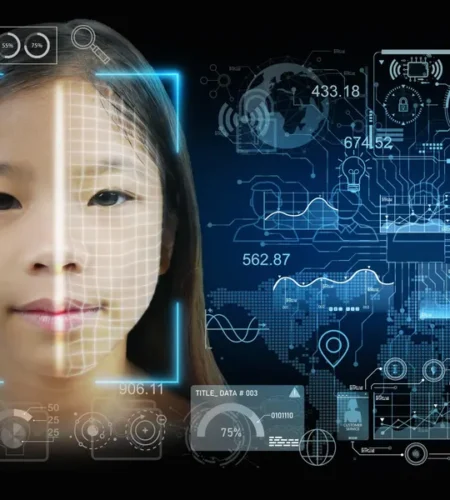

Imagine this: a child wants to create an account on a social media platform. Instead of just entering a birth date, they may now be asked to take a live “video selfie” or present a government ID. This is the new frontier of “age assurance” technology, where AI algorithms analyze facial features to estimate a user’s age. Companies like Yoti, Incode, and VerifyMyAge are leading this charge, providing tools that scrutinize millions of faces annually to keep underage users out of restricted areas online.

The Promise of Protection

Proponents of AI age verification argue that these tools offer parents greater peace of mind. By ensuring that only users of an appropriate age can access certain content, these technologies could reduce the risks associated with children’s online activities. Major platforms like Facebook, Instagram, and TikTok have already integrated age-check tools to restrict access for their youngest users. Even OpenAI’s ChatGPT employs similar mechanisms to comply with age-related regulations.

In theory, this creates a safer online environment for kids. It would reduce their exposure to harmful content and inappropriate interactions, in line with recent legislative pushes in 19 U.S. states to require online age checks. This surge in legislative activity reflects growing societal concerns about the internet’s impact on youth, a sentiment echoed by the U.S. Surgeon General’s call for warning labels on social media platforms due to their potential mental health risks.

The Privacy Trade-Off

However, the implementation of AI age verification is not without significant drawbacks. For one, the very nature of these systems requires the collection and analysis of vast amounts of personal data, including facial scans and government IDs. Critics argue that this level of surveillance is unprecedented and poses severe privacy risks. The potential for data breaches, hacking, and misuse of sensitive information is a considerable concern.

Moreover, the accuracy of these tools can vary. While federal tests have shown that age estimators can be precise, they are not foolproof. Factors such as facial expressions, eyeglasses, and even certain demographic characteristics can affect the results, leading to errors. For instance, women and girls often experience higher error rates, which can result in wrongful access denials. This not only frustrates legitimate users but also raises questions about the fairness and reliability of these technologies.
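To make the fairness concern concrete, here is a minimal Python sketch of how one might compare error rates across groups. Everything in it is an assumption for illustration: the sample records are invented, the group labels, the `MIN_AGE` threshold of 18, and the `error_report` function are hypothetical, and it does not reproduce any vendor's or federal test's methodology. It computes two simple measures per group: the mean absolute error of the age estimate, and the share of users who are old enough but would be wrongly denied.

```python
from collections import defaultdict

# Hypothetical records: (group label, true age, estimated age).
# These numbers are invented for illustration, not measured results.
SAMPLES = [
    ("female", 19, 16.5),
    ("female", 22, 20.0),
    ("female", 30, 27.0),
    ("male", 19, 18.5),
    ("male", 22, 23.0),
    ("male", 30, 29.0),
]

MIN_AGE = 18  # assumed access threshold


def error_report(samples, min_age=MIN_AGE):
    """Return per-group mean absolute error and false rejection rate."""
    grouped = defaultdict(list)
    for group, true_age, estimated_age in samples:
        grouped[group].append((true_age, estimated_age))

    report = {}
    for group, rows in grouped.items():
        # Average size of the estimation error, in years.
        mae = sum(abs(t - e) for t, e in rows) / len(rows)
        # Users who really are old enough to be admitted.
        eligible = [(t, e) for t, e in rows if t >= min_age]
        # Eligible users whose estimate falls below the threshold.
        rejected = [(t, e) for t, e in eligible if e < min_age]
        frr = len(rejected) / len(eligible) if eligible else 0.0
        report[group] = {"mae": mae, "false_rejection_rate": frr}
    return report


if __name__ == "__main__":
    for group, stats in error_report(SAMPLES).items():
        print(group, stats)
```

A gap between groups on either measure is the kind of disparity critics point to. A real audit would need far larger samples and a documented methodology.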

Ethical and Social Implications

Beyond privacy, there are broader ethical and social implications to consider. Age verification tools, while designed to protect, can inadvertently contribute to a culture of surveillance. As these systems become more widespread, they could normalize intrusive monitoring practices, eroding trust and personal freedoms.

Additionally, the implementation of age checks could disproportionately affect marginalized groups. Individuals with disabilities or those lacking proper identification might find themselves unjustly barred from accessing services. Furthermore, the use of such technologies by governments and private companies could lead to overreach, with laws potentially being used to censor or restrict access to information based on subjective criteria.

Navigating the Future

The debate over AI age verification is emblematic of the broader challenges we face in the digital age: balancing safety, privacy, and ethical considerations. As these technologies continue to evolve, it is crucial to develop robust frameworks that protect users’ rights while addressing legitimate safety concerns.

For lawmakers, this means crafting regulations that ensure transparency and accountability in how these tools are used. For technology companies, it means prioritizing data security and user consent. For society at large, it requires ongoing dialogue about the ethical use of AI.

Conclusion

AI age scanners represent a significant advancement in online safety for children, providing tools that can help shield young users from harmful content. However, the path to implementing these technologies is fraught with challenges, particularly around privacy and ethics. As we move forward, it is essential to strike a balance that protects children while respecting the privacy and rights of all users. Only through careful consideration and responsible innovation can we create a digital landscape that is both safe and fair for everyone.

In the end, safeguarding our children online should not come at the expense of our privacy. By working together, we can develop solutions that uphold the values of trust and security, ensuring a better and safer internet for future generations.